Objective

MOVIN integrates NVIDIA SOMA into its LiDAR-centric motion capture pipeline to deliver human motion data in a standardized parametric representation for physical AI—spanning robotics simulation, humanoid control, teleoperation, and embodied AI training.

Customer

MOVIN Inc.

Use Case

Physical AI

Products

NVIDIA SOMA, NVIDIA Isaac Sim, NVIDIA Isaac Lab

Key Takeaways

- MOVIN uses LiDAR-centric markerless motion capture to stream full-body human motion in real time at approximately 60 FPS, with roughly 2–3 cm positional deviation from optical references in internal evaluations.

- Integration with NVIDIA SOMA maps captured motion into a standardized 77-joint parametric human skeleton for downstream physical AI workflows.

- A calibration-time SOMA Layer server generates actor-specific skeleton configurations via GPU inference, keeping the runtime pipeline lightweight.

- The MOVIN Python SDK and platform plugins enable direct streaming into NVIDIA Isaac Sim, Isaac Lab, and MuJoCo with automatic coordinate conversion.

- Captured motion supports physical AI applications including teleoperation, humanoid robot retargeting, demonstration data collection, embodied AI training, and sim-to-real transfer.

The Challenge: Bridging Motion Capture and Physical AI

Human motion data is becoming an increasingly critical resource for physical AI. Training humanoid robots to walk, building world models that understand human behavior, and developing embodied AI systems that interact with real-world environments—all rely on large-scale, high-quality demonstrations of how humans move, manipulate, and navigate physical spaces.

Yet capturing that motion and delivering it into physical AI pipelines remains a fragmented process. Traditional motion capture systems produce skeleton hierarchies, body models, and coordinate conventions that vary from one tool to the next. A motion clip captured in one system typically cannot be used directly in a simulation environment such as NVIDIA Isaac Sim or MuJoCo without manual retargeting, coordinate conversion, and skeleton remapping.

This friction slows down the development cycle across a broad range of physical AI applications—from humanoid locomotion and teleoperation to imitation learning and world model training.

The Solution: NVIDIA SOMA Integration

NVIDIA SOMA addresses this challenge by providing a unified parametric human representation. SOMA defines a standardized 77-joint skeleton with a parametric body model that encodes both body shape and pose. By establishing a common foundation for how human motion is represented, SOMA enables motion data, tools, and simulation platforms to interoperate within the same ecosystem—from data capture through simulation to physical AI training.

MOVIN integrates SOMA directly into its LiDAR-centric motion capture pipeline so that human motion captured in the real world flows seamlessly into physical AI–ready formats—without manual conversion steps.

LiDAR-Centric Motion Capture Designed for Physical AI

MOVIN is a Korean AI startup building LiDAR-centric motion capture technology for real-time human motion tracking. While the technology serves industries spanning content production and interactive media, its design is particularly well suited to physical AI workflows (robotics, humanoid control, embodied AI, and world model training), where spatially accurate, real-time motion data is essential.

The core capture system, MOVIN TRACIN, performs AI-driven, real-time, markerless full-body motion capture using a compact sensing setup: a single 3D LiDAR sensor, an RGB camera, and an NVIDIA Jetson module for on-device AI inference. Unlike traditional optical motion capture systems that require multiple synchronized cameras and controlled studio environments, MOVIN TRACIN works with a single LiDAR sensor that captures dense 3D point clouds with explicit XYZ positions. This makes it possible to capture human motion using spatially grounded measurements in ordinary indoor and outdoor environments such as offices, warehouses, and labs, rather than on purpose-built capture stages.

The system tracks a full-body skeleton consisting of 22 body joints and 15 hand joints per hand, streaming motion data at approximately 60 frames per second with around 100 milliseconds of end-to-end latency. In internal evaluations against optical motion capture references, MOVIN reports approximately 2–3 cm positional deviation, making the system suitable for physical AI workflows that demand reliable, metrically accurate motion data.

System Architecture: From Capture to Simulation

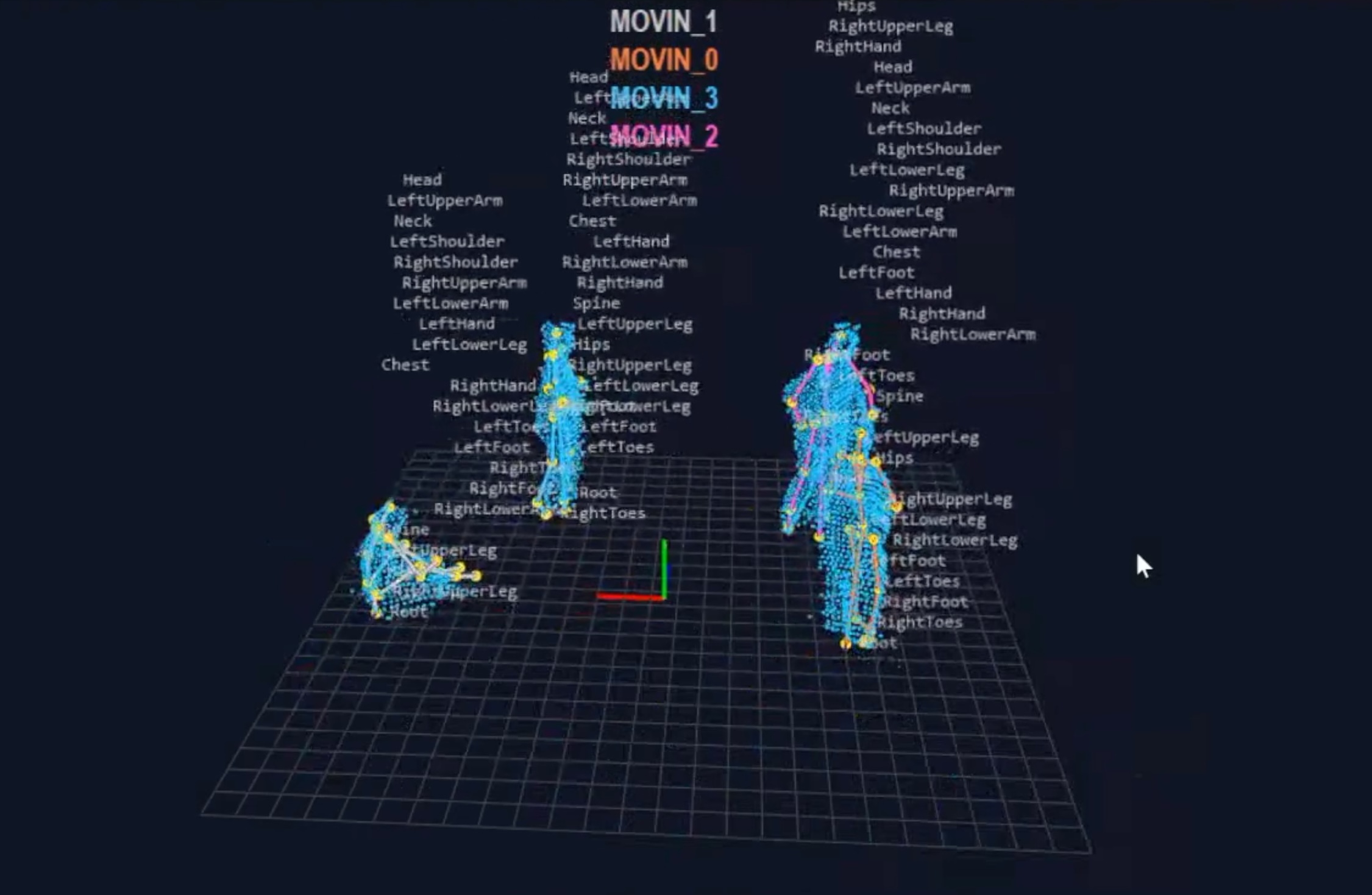

The integration between MOVIN and SOMA spans three stages: calibration-based skeleton adaptation, real-time motion retargeting, and platform-specific streaming into simulation environments.

Calibration-Based Skeleton Adaptation. When an actor enters the capture volume, MOVIN Studio runs a calibration step to estimate the actor’s body shape. The system derives MHR parameters (a set of 45 body shape coefficients and 75 scale parameters) from the LiDAR and camera observations. These parameters are sent via HTTP to the SOMA Layer server, a GPU-backed inference service that loads the SOMA parametric model. On the server side, the SOMALayer model receives the MHR coefficients and scale values, computes the actor-specific T-pose skeleton via forward kinematics and skinning, and returns the local positions and global transforms of all 77 joints as a JSON response. Because this skeleton adaptation occurs once per actor calibration rather than every frame, the SOMA inference step adds no per-frame runtime overhead.
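The calibration exchange can be sketched as a pair of helpers that build the request body and validate the response. This is a minimal illustration only: the JSON field names (`shape`, `scale`, `local_positions`, `global_transforms`) are hypothetical, since the actual SOMA Layer server schema is not described here. Only the counts (45, 75, 77) come from the text above.

```python
import json

NUM_SHAPE_COEFFS = 45  # body shape coefficients (from the text)
NUM_SCALE_PARAMS = 75  # scale parameters (from the text)
NUM_SOMA_JOINTS = 77   # SOMA skeleton joint count (from the text)


def build_calibration_request(shape, scale):
    """Serialize MHR parameters into a JSON body (field names are hypothetical)."""
    if len(shape) != NUM_SHAPE_COEFFS:
        raise ValueError(f"expected {NUM_SHAPE_COEFFS} shape coefficients, got {len(shape)}")
    if len(scale) != NUM_SCALE_PARAMS:
        raise ValueError(f"expected {NUM_SCALE_PARAMS} scale parameters, got {len(scale)}")
    return json.dumps({
        "shape": [float(v) for v in shape],
        "scale": [float(v) for v in scale],
    })


def parse_skeleton_response(body):
    """Check that a JSON response describes 77 joints and return its two arrays."""
    data = json.loads(body)
    local_positions = data["local_positions"]      # hypothetical key: 77 x [x, y, z]
    global_transforms = data["global_transforms"]  # hypothetical key: 77 transforms
    if len(local_positions) != NUM_SOMA_JOINTS:
        raise ValueError("local_positions does not contain 77 joints")
    if len(global_transforms) != NUM_SOMA_JOINTS:
        raise ValueError("global_transforms does not contain 77 joints")
    return local_positions, global_transforms
```

Because the exchange happens once per actor, a client could cache the parsed skeleton and reuse it for every subsequent frame of that session.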

Real-Time Motion Retargeting. After calibration, MOVIN captures human motion in real time and applies its retargeting algorithm to map each frame from the MOVIN internal skeleton representation to the SOMA 77-joint hierarchy. The retargeted motion includes per-joint 3×3 rotation matrices and root translation for each frame. The motion data is transmitted over a UDP-based streaming protocol using OSC (Open Sound Control) messages. Because a single SOMA frame exceeds the typical UDP packet size limit, MOVIN Studio splits each frame into chunked OSC packets. The MOVIN Python SDK reassembles these chunks on the receiving end, reconstructing complete pose frames from the stream.
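To see why chunking is needed, consider the raw payload size: 77 joints with nine rotation values each, plus three root translation values, is 696 numbers. Assuming 32-bit floats, that is 2,784 bytes, well above the roughly 1,472 bytes of UDP payload that fit in a standard 1,500-byte Ethernet MTU without fragmentation. The sketch below shows one way a receiver might reassemble chunks that arrive out of order. The chunk header fields (`frame_id`, `index`, `count`) and the 1,200-byte chunk size are assumptions for illustration, not the actual MOVIN OSC message layout.

```python
NUM_JOINTS = 77
FLOATS_PER_FRAME = NUM_JOINTS * 9 + 3  # 3x3 rotation per joint + root translation
FRAME_BYTES = FLOATS_PER_FRAME * 4     # assuming 32-bit floats
MAX_CHUNK_BYTES = 1200                 # assumed chunk size, below a 1500-byte MTU


def split_frame(frame_id, payload, max_chunk=MAX_CHUNK_BYTES):
    """Split one frame payload into (frame_id, index, count, data) chunks."""
    count = max(1, -(-len(payload) // max_chunk))  # ceiling division
    return [
        (frame_id, i, count, payload[i * max_chunk:(i + 1) * max_chunk])
        for i in range(count)
    ]


class FrameAssembler:
    """Collect chunks per frame and return the payload once all have arrived."""

    def __init__(self):
        self._pending = {}  # frame_id -> {chunk index: bytes}

    def add(self, frame_id, index, count, data):
        parts = self._pending.setdefault(frame_id, {})
        parts[index] = data
        if len(parts) < count:
            return None  # frame still incomplete
        del self._pending[frame_id]
        return b"".join(parts[i] for i in range(count))
```

Since UDP does not guarantee delivery, a production receiver would also need to evict frames whose chunks never arrive; that is omitted here for brevity.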

Platform Plugins. Once the SDK has assembled a complete SOMA frame, platform-specific plugins apply the final transformations needed for each target simulation environment—including coordinate system conversion (Y-up to Z-up), joint orientation correction for MJCF-based articulations, and DOF reordering. The current release includes plugins for NVIDIA Isaac Sim, NVIDIA Isaac Lab, and MuJoCo, with the architecture designed to support additional physical AI simulation platforms.
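The coordinate conversion and DOF reordering steps can be illustrated with a small pure-Python sketch. It assumes both frames are right-handed and that Y-up maps to Z-up through a +90 degree rotation about the X axis, so that (x, y, z) becomes (x, -z, y). The actual plugins may use a different axis convention, and the joint names in the usage below are placeholders.

```python
# Assumed change of basis: +90 degree rotation about X, mapping Y-up to Z-up.
C = (
    (1.0, 0.0, 0.0),
    (0.0, 0.0, -1.0),
    (0.0, 1.0, 0.0),
)


def matmul3(a, b):
    """Multiply two 3x3 matrices given as nested tuples."""
    return tuple(
        tuple(sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3))
        for i in range(3)
    )


def transpose3(m):
    return tuple(tuple(m[j][i] for j in range(3)) for i in range(3))


def convert_position(p):
    """Express a Y-up position in the Z-up frame: p' = C p."""
    return tuple(sum(C[i][k] * p[k] for k in range(3)) for i in range(3))


def convert_rotation(r):
    """Express a Y-up rotation matrix in the Z-up frame: R' = C R C^T."""
    return matmul3(matmul3(C, r), transpose3(C))


def reorder_dofs(values, source_names, target_names):
    """Reorder per-joint values from the source ordering to the target ordering."""
    index = {name: i for i, name in enumerate(source_names)}
    return [values[index[name]] for name in target_names]
```

For example, `convert_position((1, 2, 3))` yields `(1, -3, 2)` under this convention, and a rotation about the Y-up vertical axis becomes the same rotation about the Z-up vertical axis.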

Results: End-to-End Physical AI Pipeline

Driving a SOMA human body model in NVIDIA Isaac Lab

The MOVIN Python SDK and its Isaac Lab plugin demonstrate the full pipeline from motion capture to simulation. The SDK connects to MOVIN Studio over UDP and streams captured motion into Isaac Lab in real time. The SOMA skeleton is instantiated as an MJCF-based articulation in the simulation environment, and each incoming frame updates the articulation’s root pose and joint DOF values at 60 FPS. An optional SOMA surface mesh overlay provides higher visual fidelity by rendering the parametric body model alongside the skeleton.

Humanoid Robot Retargeting

Beyond driving a SOMA humanoid avatar, the pipeline supports real-time retargeting to humanoid robot models, a key capability for physical AI systems that learn from human demonstrations. The retargeting is based on inverse kinematics (IK) and maps the SOMA skeleton to robot-specific joint configurations.

The retargeter maps SOMA joint positions through an FK chain to compute target end-effector positions, then solves IK for the robot’s kinematic structure. The system supports configurable human height scaling and multiple display modes—side-by-side comparison, robot-only view, or overlaid visualization—enabling researchers to evaluate how human demonstrations map onto specific robot morphologies. This capability is particularly relevant for imitation learning and teleoperation workflows, where human motion demonstrations need to be mapped onto robots with different limb proportions, joint limits, and degrees of freedom.
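The FK, scale, then IK sequence described above can be sketched for a simplified case. The example below is a planar two-link arm with an analytic IK solution, chosen only to make the three steps concrete. It is not MOVIN's solver, which operates on the full 3D SOMA skeleton, and all function names and link lengths are hypothetical.

```python
import math


def fk_planar(lengths, angles):
    """Forward kinematics: accumulate relative joint angles along a planar chain."""
    x = y = total = 0.0
    for length, angle in zip(lengths, angles):
        total += angle
        x += length * math.cos(total)
        y += length * math.sin(total)
    return x, y


def scale_target(position, human_height, robot_height):
    """Scale a human end-effector position by the robot-to-human height ratio."""
    s = robot_height / human_height
    return position[0] * s, position[1] * s


def ik_two_link(target, l1, l2):
    """Analytic IK for a two-link planar arm; returns (shoulder, elbow) angles."""
    x, y = target
    # Clamp the distance so unreachable targets map to the nearest reachable pose.
    d = min(max(math.hypot(x, y), abs(l1 - l2)), l1 + l2)
    cos_elbow = (d * d - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    elbow = math.acos(max(-1.0, min(1.0, cos_elbow)))
    shoulder = math.atan2(y, x) - math.atan2(
        l2 * math.sin(elbow), l1 + l2 * math.cos(elbow)
    )
    return shoulder, elbow


# Usage: human arm pose -> wrist position -> scaled target -> robot joint angles.
human_wrist = fk_planar((0.30, 0.25), (0.4, 0.6))
robot_target = scale_target(human_wrist, human_height=1.75, robot_height=1.30)
robot_angles = ik_two_link(robot_target, 0.20, 0.20)
```

Running the robot's own FK on `robot_angles` reproduces `robot_target` whenever the scaled target lies within the robot's reach, which is how a retargeting result of this kind can be sanity-checked.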

Multimodal Data Collection for Embodied AI

MOVIN’s LiDAR-centric capture system produces more than skeleton data alone. Because the system operates on raw 3D point clouds and RGB images, it can simultaneously collect temporally and spatially aligned multimodal data: motion capture skeletons, LiDAR point clouds, and camera imagery, all registered in the same coordinate frame and synchronized in time.

This multimodal data is valuable for training the next generation of physical AI systems—embodied agents, world models, and perception modules that need to learn from rich human experience data rather than motion trajectories alone. For hand manipulation tasks, MOVIN TRACIN provides its own hand capture model, and the system also supports integration with third-party hand tracking devices such as Meta Quest, Manus, and Rokoko for higher-fidelity manipulation data.

Looking Ahead

By combining LiDAR-centric motion capture with NVIDIA SOMA’s unified parametric human representation, MOVIN enables a streamlined path from real-world human motion capture to physical AI pipelines. The integration eliminates the manual retargeting and format conversion that typically stand between capturing human demonstrations and using them to train physical AI systems, whether for humanoid robot control, embodied agent training, or world model development.

Developers can capture human motion in ordinary environments, stream it in real time into NVIDIA Isaac Sim or Isaac Lab, retarget it to humanoid robots, collect multimodal demonstration data, and record datasets for offline training—all using a compact, portable hardware setup and a standardized data representation.

Future development directions include native SOMA layer integration within the MOVIN Studio application, SOMA-format motion data export for offline dataset creation, and expanded support for additional physical AI simulation platforms and robot morphologies.

Learn more about NVIDIA SOMA and NVIDIA Isaac Sim. To explore the MOVIN motion capture system, visit movin3d.com.